- Alberduris

RLM, recursion, or just a pause mechanism for Agents? State of self-messaging

How the difference between `end_turn` and `tool_use` can give Claude Code agents something close to an internal monologue.

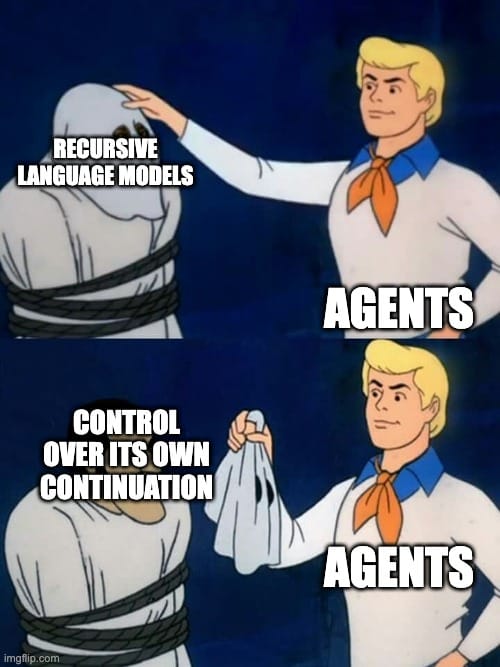

The hot topic this week seems to be recursive agents. ypi, for example, is a recursive agent built on Pi that implements the Recursive Language Models (RLM) pattern. The idea comes from a December 2025 MIT paper and boils down to one primitive: giving the agent an `llm_query()` function that lets it invoke itself recursively, decompose problems into sub-problems, and solve them through self-delegation. The concept is elegant, and the tweets came with the usual magic words ("early sparks of AGI", "wouldn't be surprised if all major agent harnesses adopt RLM"). None of this sounded like something Claude Code couldn't already do, so I looked into whether anyone had ported it, and whether what was underneath was real.

Turns out there are at least half a dozen repos that claim to bring RLM to Claude Code. They have ambitious names and longer READMEs, but when you look at what they actually do, most of them are wrappers around Claude Code's stock subagent system with some extra scaffolding on top. All interesting things in isolation, but none of them are recursion. Claude Code's subagents cannot spawn other subagents; it's one level deep by design. So by definition, you can't build real RLM on top of it. What these repos actually do is dress up the existing delegation system with a new label.

But wait! None of this is necessary.

Claude Code already has true recursion out of the box. From any normal session, the agent can run `claude -p "your prompt"` through the Bash tool, and that child process is a full Claude Code instance with all tools available, including Bash, which means it can call `claude -p` again. This is the same architecture that makes ypi work (OS-level child processes rather than sandboxed subagents), except there's nothing to install. So the prediction that "all major agent harnesses will adopt RLM in the next few months" is a bit awkward, because there's nothing to adopt. The primitive is already there and, as usual, it's just a shell command.
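To make the process-tree idea concrete, here is a minimal Python sketch of a wrapper that shells out to `claude -p`. The bare shell command is all the recursion needs; the depth cap, the `RECURSION_DEPTH` variable name, and the helper names are assumptions added for illustration and are not part of Claude Code.

```python
import os
import subprocess

DEPTH_VAR = "RECURSION_DEPTH"  # hypothetical variable name, not a Claude Code setting
MAX_DEPTH = 3                  # arbitrary cap chosen for this sketch


def child_command(prompt: str) -> list[str]:
    """Build the argv for a non-interactive child: claude -p "<prompt>"."""
    return ["claude", "-p", prompt]


def child_env(env: dict[str, str]) -> dict[str, str]:
    """Copy the environment and bump the depth counter, refusing past the cap."""
    depth = int(env.get(DEPTH_VAR, "0"))
    if depth >= MAX_DEPTH:
        raise RuntimeError(f"recursion depth {depth} reached the cap of {MAX_DEPTH}")
    return {**env, DEPTH_VAR: str(depth + 1)}


def delegate(prompt: str) -> str:
    """Run a child Claude Code process and return whatever it printed."""
    result = subprocess.run(
        child_command(prompt),
        env=child_env(dict(os.environ)),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

Because each child inherits the incremented counter through its environment, a runaway chain of self-invocations stops at the cap instead of forking indefinitely.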

But once you've established that recursion is already there and that the RLM repos are mostly packaging, the more interesting question becomes what you'd actually want that isn't already built in. And the answer, at least for me, wasn't recursion at all.

Yes, my fellow reader. You've been tricked into reading a post about Recursive Language Models that is really about something else entirely: giving agents agency over their own discontinuous nature.

Recursion is about spawning children; the agent delegates downward and collects results. What I found more intriguing was something like an internal monologue, where the agent can finish a stretch of work, pause, write itself a continuation vector, and then pick back up in the same session with all context preserved.

If this reminds you of the Ralph Wiggum loop, read this. The Ralph Wiggum loop puts the agent inside a while true that restarts it with the same prompt every time it stops. Each iteration is a new session with a blank context; the agent doesn't even know it's in a loop, and the state survives only through files on disk and git. It works through sheer persistence and repetition.
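For contrast, the Ralph Wiggum loop can be sketched in a few lines of Python. The function and parameter names are hypothetical, and the loop is bounded here only so the example terminates; the real pattern is an unbounded `while true`.

```python
from typing import Callable


def ralph_loop(prompt: str, start_fresh_session: Callable[[str], None], iterations: int) -> None:
    """Restart the agent with the identical prompt on every pass."""
    for _ in range(iterations):  # stands in for an unbounded `while true`
        # Each call begins with a blank context; anything the agent should
        # remember has to already live in files on disk or in git.
        start_fresh_session(prompt)
```

Nothing in the loop carries information from one pass to the next, which is exactly the property that separates it from the same-session approach.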

What I'm describing here goes in the opposite direction. The agent decides when to pause, writes itself a message about what to try next, and picks back up in the same session. They're complementary patterns, and they aim to solve different problems.

Agents can stop, but they can't pause

An LLM's output is a continuous stream that ends when it stops generating. There's no intermediate state between "producing tokens" and "done".

An "agent" is just an LLM inside an agentic loop. The user sends a message, the agent emits text alongside optional tool calls for as long as it needs, and eventually (when it emits a text block without any further tool calls) it stops. That full cycle is a "multi-turn" agentic loop.
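The loop described above can be sketched as follows. The message dictionaries are a simplified, hypothetical shape rather than any real SDK's types; only the two `stop_reason` values, `tool_use` and `end_turn`, are taken from the API.

```python
from typing import Callable

Message = dict  # simplified: {"role", "content", "stop_reason", optional "tool_calls"}


def agentic_loop(
    history: list[Message],
    model: Callable[[list[Message]], Message],
    run_tool: Callable[[dict], str],
) -> list[Message]:
    """Call the model until it answers without requesting a tool."""
    while True:
        reply = model(history)
        history.append(reply)
        if reply["stop_reason"] != "tool_use":
            return history  # "end_turn": control goes back to the user
        # The model asked for something, so run each tool and feed results back.
        for call in reply["tool_calls"]:
            history.append({"role": "tool", "content": run_tool(call)})
```

The only exit from the loop is a reply that carries no tool call, which is why the agent has a way to stop but no way to pause.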

Within the agentic loop, the agent can use tools for as long as it “wants”,1 but its “reasoning frame” doesn't change. This is compounded by something deeper: LLMs are trained through RLHF to finish, that is, to work through a problem, produce a final response, and stop. You can fight that with prompting up to a point, but you're pushing against the hardwiring.

So agents can stop, but they can't pause. There's no equivalent of stepping back mid-task and going "aight, let me pause a bit and continue" (apart from the interleaved thinking). They can keep going (thinking), or just finish; and then they need you to say something before they can start again.

Now, you might look at this and think the whole thing is bullshit because the model already "stops" after every generation. And technically, that's true. Every single message from an LLM is a separate generation over the full conversation history: the model is fed the context and produces an output. Whether it made a tool call or finished its loop, the mechanics are the same.

But the conversation history isn't the same in both cases. When the model makes a tool call, the last thing it wrote was a “request for information”. It was, in RLHF terms, trained to continue after receiving tool results. The tokens at the tail of the history say "I need something" to keep going. When the model finishes a turn with no tool calls, the last thing it wrote was a terminal text. The tokens at the tail say "I'm done". These are two different conversational states that the model was post-trained to handle differently, and the next generation depends heavily on which one it follows (at the end of the day, an LLM is just an autoregressive next-token predictor 😉).

The self-message mechanism exploits exactly this difference. The agent writes its continuation message through a normal tool call, then finishes its turn with no further tool calls, triggering `end_turn`. The Stop hook catches that `end_turn` and feeds the message back. A new set of turns starts from there, but from a concluded state rather than a continuing one.

Note that this is not the same as the agent echoing itself mid-turn and continuing; the model genuinely finished, and was restarted. An agentic loop boundary created from the inside, without the user having to intervene. Agency over their own continuation.
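As a rough model of that control flow, the sketch below wraps the agentic loop in an outer loop that restarts it whenever a self-message is waiting. All names are hypothetical, and `take_self_message` stands in for whatever the Stop hook reads; this is a conceptual sketch, not how Claude Code is implemented internally.

```python
from typing import Callable, Optional


def session(
    user_prompt: str,
    run_turns: Callable[[list[str], str], None],
    take_self_message: Callable[[], Optional[str]],
    max_depth: int = 200,
) -> list[str]:
    """Run one session in which the agent may restart itself after end_turn."""
    history: list[str] = []  # shared across restarts: context is preserved
    incoming: Optional[str] = user_prompt
    for _ in range(max_depth + 1):
        run_turns(history, incoming)    # runs until the model emits end_turn
        incoming = take_self_message()  # what the Stop hook would find
        if incoming is None:
            break                       # a real stop: wait for the user
    return history
```

The restart happens after the model has concluded its turn, so each pass begins from a finished state while still sharing one history.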

To be clear, I'm not claiming that this "unlocks AGI", and it doesn't mean agents can now "work continuously over multi-day horizons" or anything like that. If anything, the practical capabilities it offers right now are modest.

The uncertain part is that the self-message arrives as system-level hook feedback, not as a regular user message (due to limitations of Claude Code). Whether that carries the same weight in the model's next generation is an open question. It might, it might not (and I suspect not), but that's as far as we can go within Claude Code's current architecture.

How it works

You can implement this however you want and there are probably dozens of valid approaches. There's no magic here, and the specifics don't matter much. In this case, I went with three components that fit together in about fifty lines of code.

The first is a small shell script called `self-message.sh` that the agent calls when it wants to send itself a message. The agent doesn't know or care what happens inside; it just passes a string. The script writes that string to a temp file and exits.

The second is the Stop hook described above, which checks for that file on every `end_turn` and, if it finds one, blocks the stop and feeds the content back.

The third is a Skill, which just teaches the agent that the mechanism exists.

The agent never sees the temp file, the hook, or any of the plumbing. From its perspective, it calls a function with a message, finishes its turn, and then receives that message back as context for a fresh agentic loop.
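The first two components can be sketched in Python as below. The actual plugin uses a shell script for the first piece, so treat this as an equivalent illustration rather than its code. The file path and function names are hypothetical, and the `{"decision": "block", "reason": ...}` output is assumed to follow Claude Code's documented Stop-hook response format, which is worth checking against the current hook reference.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical location; the post only says "a temp file".
PENDING = Path(tempfile.gettempdir()) / "self-message-pending.txt"


def queue_self_message(message: str, path: Path = PENDING) -> None:
    """What the agent-facing script does: store the message and exit."""
    path.write_text(message, encoding="utf-8")


def stop_hook_output(path: Path = PENDING) -> str:
    """What the Stop hook prints: nothing to allow the stop, JSON to block it."""
    if not path.exists():
        return ""  # no message queued, so the turn ends normally
    message = path.read_text(encoding="utf-8")
    path.unlink()  # consume the message so it is delivered only once
    return json.dumps({"decision": "block", "reason": message})
```

A hook script built on this would simply print the result of `stop_hook_output()`. Deleting the file on read is a design choice: without it, the same message would block every subsequent stop.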

Where this breaks down

Right now this is more of a toy than a useful tool. The current generation of LLMs has real limitations that compound quickly in autonomous loops. Slop accumulates, and without a human in the loop to course-correct, self-messaging can amplify drift just as easily as it can fix it. The same problem that plagues the Ralph Wiggum loop, agent teams, and every other "let it run autonomously" approach applies here too. Errors compound like mutations in DNA or bit rot in storage systems.

Context windows make this worse. Opus 4.6, for example, produces adequate reasoning within roughly the first 100k tokens. Beyond that, quality degrades noticeably. A self-messaging agent burning through context with each phase will hit that ceiling fast.

I think this becomes genuinely useful when the models get better. It feels a bit like tool calling did in its early days, when the capability existed before the models could really take advantage of it.

Self-messaging, RLM, and autonomous agent work in general all still need models that generate less slop, that maintain coherence over longer contexts, and that can actually produce calibrated self-assessments rather than the kind of confident-but-wrong metacognition that current models tend toward. We're not there yet, but we'll probably be there sooner than expected; and the build is already here.

Try it yourself

The self-message mechanism is available as an open-source Claude Code plugin. Install it via the plugin system and the Stop hook registers automatically:

`/plugin marketplace add alberduris/claude-code-marketplace`

`/plugin install self-message@alberduris-marketplace`

Or install it manually by cloning the repo and following the setup instructions.

If you want to reinforce that your agent uses self-messaging proactively, add this line to your CLAUDE.md:

When facing complex tasks, approach changes, or mid-task reframing needs, invoke the self-message skill to send yourself a continuation message.

The default depth limit is 200 consecutive self-messages, which covers roughly 8-12 hours of autonomous work at average turn lengths. I don't recommend running it that long, and I don't think it's practical yet, but it makes for a cool experiment if you want to burn some tokens and tweet about it. The limit is configurable via the `SELF_MSG_MAX_DEPTH` environment variable.
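A depth check along these lines might look like the following sketch. Only the variable name `SELF_MSG_MAX_DEPTH` and the default of 200 come from the plugin; the function names and the way the current depth is tracked are assumptions.

```python
import os
from typing import Mapping, Optional

DEFAULT_MAX_DEPTH = 200


def max_depth(env: Optional[Mapping[str, str]] = None) -> int:
    """Read SELF_MSG_MAX_DEPTH, falling back to the default of 200."""
    env = os.environ if env is None else env
    return int(env.get("SELF_MSG_MAX_DEPTH", DEFAULT_MAX_DEPTH))


def may_continue(depth: int, env: Optional[Mapping[str, str]] = None) -> bool:
    """True while one more consecutive self-message is allowed."""
    return depth < max_depth(env)


def minutes_per_message(hours: float, messages: int = DEFAULT_MAX_DEPTH) -> float:
    """Average wall-clock minutes each self-message phase would take."""
    return hours * 60 / messages
```

With the default limit, 8 to 12 hours spread over 200 self-messages works out to about 2.4 to 3.6 minutes per phase.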

1 The LLM doesn't "want" anything; how long it goes depends almost entirely on the post-training procedure, and it responds primarily to perceived value optimization and computational cost criteria.